(…and everything else, too)

In one of the apologetics presentations I give, I ask the audience to shout out as many logical fallacies as they can in 15 seconds, and they usually list about seven. I then ask them to shout out all the psychological biases they can, and they almost always say confirmation bias and nothing else. People generally seem more aware of fallacies and correctly recognize them as errors in reasoning, but few people are aware of the huge number of psychological factors that affect our every decision.

When I first started studying psychology and apologetics, I thought that people were rational beings. I quickly discovered that we are not as rational as we think. However, it took years of studying bias and interacting with people for me to realize that people are far from rational. Here’s the thing though: we can be rational, but when it comes to topics like religion, politics, or any other emotionally charged topic, it requires a lot of hard work to be rational. We need the patience to withhold premature judgments, we need the courage to confront our emotions and challenge the standard view of our social groups, and we need the humility to admit we might be wrong or ignorant.

Below is a list of all the broadly accepted psychological factors I could find that influence our reasoning, usually in a non-rational way. Most, if not all, of these biases are unconscious, so we cannot even know if they are affecting us. We know they exist because of clever experiments by psychologists. These are the factors we must overcome when we make decisions and the factors we need to help others avoid when doing apologetics. What’s especially interesting is that after people are made aware of these factors, they almost always say the factors didn’t have an effect on them, but the data do not lie.

What’s unique about this list compared to others you might find on the internet is that I use illustrations that present these in the context of apologetics and I’ve cross-referenced each factor with related ones and fallacies.

Please let me know if you think others should be added or if something is unclear. This list is meant to be a reference for myself and anyone else who wants to use it. New terms are in blue.

Aesthetics or Beauty – People tend to be more open to and accepting of things that are considered aesthetically appealing. This applies to people and objects, and it has a cross-influence between our senses. For instance, a soda will taste better in an appealing can compared to an ugly can.

– Same or nearly the same as

– Related to liking, first impressions, intuition

Affect Heuristic – The tendency to rely on our current emotions to make quick decisions. Disgust is particularly powerful for moral decisions. When we make decisions based on our emotions, we usually come up with post hoc (after the fact) reasons for our decision.

– Same or nearly the same as intuitive cognitive style

– Related to an appeal to emotions

Agreeableness – This is a facet of personality that relates to whether or not we will agree to something. People high in agreeableness are more likely to go along with what others do and say, at least in the moment, although I am not sure if they are actually more likely to change their minds. On the other hand, people low in agreeableness are likely to disagree for little or no reason. Someone low in agreeableness will probably not be much fun to talk to (so don’t be that person) and will likely require more effort when engaging in apologetics with them.

– Same or nearly the same as

– Related to reactance (opposite)

Anchoring Effect – When we have a value or representation in our mind, this becomes the standard by which we judge other options, even if it’s arbitrary. In other words, this value or belief becomes your anchor for how you judge other things in relation to it.

– Same or nearly the same as arbitrary coherence.

– Related to framing and priming.

Apophenia – the tendency to see patterns, meaning, or connections in randomness. Essentially, this is seeing shapes in clouds or finding hidden codes in the Bible. In apologetics, believers are accused of this when they claim there is design in the universe. However, the same critique can be aimed at evolution so both sides need to make a case that they are not falling victim to this bias.

– Same or nearly the same as hyperactive agency detection device (HADD), agenticity, patternicity, the clustering illusion, hot-hand fallacy, Texas sharpshooter fallacy, and pareidolia.

– Related to the false cause fallacy (aka causal fallacy), gambler’s fallacy

Arbitrary Coherence – the tendency to form a coherent view or argument based on an arbitrary value. Once an arbitrary value is accepted, people tend to act coherently based on that value.

– Same or nearly the same as the anchoring effect.

– Related to framing and priming.

Attribution Errors – An attribution error is any time we blame or credit one thing when it was really a different thing. This usually happens because several factors tend to go together and we just choose the one that is most apparent to us. This is extremely common in our world. When Christians are unloving, people often attribute this to Christianity rather than the person’s personality, culture, a specific brand of Christianity, potential misunderstandings, their own defense mechanisms, or several other potential factors.

– Same or nearly the same as fundamental attribution error, placebo effect, classical conditioning

– Related to the availability heuristic, the representativeness heuristic

Availability Heuristic – The tendency to make decisions or draw conclusions based on the data that we hear about most often or most recently instead of a systematic comparison of all the data.

– Same or nearly the same as base-rate fallacy.

– Related to the false-consensus effect, cherry-picking (fallacy), selective attention, selective perception

Backfire Effect – When a person moves farther away from a view after hearing an argument for it. This is probably the best explanation for why neither person usually changes their mind when debating religion, politics, or other heated topics.

– Same or nearly the same as belief perseverance and group polarization.

– Related to belief bias, confirmation bias, reactance.

Bandwagon Effect – The tendency to prefer popular options. People might be hesitant to become a committed Christian because they don’t see many other people who are.

– Same or nearly the same as an appeal to the majority.

– Related to the bystander effect, false-consensus effect, individualism (contrasts), mere exposure, reactance (contrasts), optimal distinctiveness.

Base Rate Fallacy – The tendency to ignore the base (average) probability of something occurring in favor of new or readily available information. An example of this is when someone points to a mutation as evidence for evolution but neglects the extremely low average rate of beneficial mutations, especially beneficial mutations that insert new information into the genome.

– Same or nearly the same as the availability heuristic

– Related to cherry-picking (fallacy), hot-hand fallacy, regression to the mean, representativeness heuristic.

Belief Bias – The tendency to evaluate arguments based on what we already believe rather than the strength of the premises. In other words, to rationalize, ignore, or misunderstand arguments that would disprove what we already believe. If we believe a conclusion, then we will deny any premises that do not support our conclusion without careful consideration of them.

– Same or nearly the same as rationalization.

– Related to affirming the consequent (fallacy), belief perseverance, confirmation bias, and straw-man fallacy.

Belief Perseverance – The tendency to maintain a belief even in the face of contrary evidence.

– Same or nearly the same as the backfire effect and group polarization.

– Related to belief bias, confirmation bias

Blindspot Bias or Bias Blindspot – The tendency for people to see themselves as less susceptible to biases than other people. This one is more likely to affect people with high IQ or education. Anecdotally, I’ve noticed that people who have converted or deconverted as an adult tend to be guilty of this by thinking they have transcended bias and no longer need to reevaluate their views.

– Same or nearly the same as “I’m not biased” bias, self-serving bias.

– Related to belief bias, belief perseverance, confirmation bias, intelligence

Bystander Effect – The tendency to not respond to a situation when there is a crowd of people also not responding. When we see a car on the side of the road, we don’t stop to help because nobody else is stopping to help. This effect happens because we don’t want to stand out, we rationalize that we might not be needed, or we actually think we might be wrong and everyone else is right. In apologetics and theology, this is when we see other people accepting sinful behaviors so we don’t try to stop them (if and when we are in the proper role to do so).

– Same or nearly the same as an appeal to the majority (fallacy) or bandwagon fallacy.

– Related to the availability heuristic, false-consensus effect, normalization, the spotlight effect, systematic desensitization.

Classical Conditioning – This is the type of conditioning where a neutral stimulus is paired with a positive or negative stimulus and our brains automatically link the two together so that we come to expect they will go together. This has actually been used to develop superstitions in pigeons (and I think other animals) and is the likely mechanism for sexual fetishes. The key is that this is an automatic process; however, it might show itself through conscious means. Someone with a legitimate superstition probably has very convincing reasons to explain how it works even though everyone else recognizes there is no connection between the superstition and the outcome.

– Same or nearly the same as attribution errors

– Related to superstition, fetishes, misperceptions

Critical Lure – A critical lure relates only to a specific memory test (DRM procedure). In this test, participants are asked to remember as many words as possible out of a series of 20 words. All the words are about the same topic, which is the critical lure. For instance, sleep would be the critical lure for a list with words like nap, rest, bed, slumber, and so on. When doing this test, about half (maybe a little more) of people will say the critical lure was on the list when it really wasn’t. This exercise reveals how memory works and that it is not as infallible as we often think. I see this principle in practice when people have debates about a subject. They will often attack a claim that the other person hasn’t made. They’ve either assumed it’s what the person believes or they may actually have some memory of the person making the claim. The point is that we should not be surprised when people assume we said things we didn’t, and on the other side, we should be more careful to remember what other people actually say.

– Same or nearly the same as

– Related to

Cognitive Ease – We are more likely to accept something or like it if it is easy to process. This includes the content, the way the content is presented, and the medium used to present it. Using a clear font when writing, speaking loud enough for people to hear, high-resolution video, and simplifying a complex concept are just a few ways to take advantage of this bias.

– Same or nearly the same as disfluency (opposite)

– Related to mere exposure, optimal distinctiveness

Cognitive Dissonance – The uncomfortable feeling we get when we have inconsistent beliefs or when our actions do not align with our beliefs. When our actions and beliefs are inconsistent, we usually change our minds to align with our actions because actions are more observable, so people won’t recognize our hypocrisy. This can be used in apologetics to show that a person’s moral concerns (environmentalism, politics, human rights, etc.) do not align with their beliefs because there is no objective morality without God.

– Same or nearly the same as

– Related to

Commitment Bias – The tendency to stick with what we’re already doing or already believe even when new evidence suggests we should change. Anyone with a firm commitment to their current beliefs about God is susceptible to this.

– Same or nearly the same as escalation of commitment, hasty generalization (fallacy), premature cognitive commitment, sunk cost fallacy.

– Related to appeal to authority, backfire effect, foot-in-the-door technique, self-herding, status-quo bias

Compensatory Control – When we lose control in one situation or domain, we try to compensate by gaining control in another area. During an election year when there is political uncertainty, religious people tend to view God as being more in control than during non-election years. We gain compensatory control through work, routine, parenting, and many other domains.

– Same or nearly the same as

– Related to attachment

Confirmation Bias – This has become a fairly broad term to describe any bias, action, or thing that helps us confirm what we already believe. It can take the form of looking only for confirmatory evidence (as opposed to evidence that potentially disproves our view), forgetting or ignoring evidence that doesn’t support our view, or interpreting evidence in a twisted way to fit our view.

– Same or nearly the same as belief bias, belief perseverance, myside bias

– Related to the availability heuristic, backfire effect, cherry-picking (fallacy), and pretty much everything else.

Conformity Bias – This is the tendency we have to conform to what other people think, say, or do. We are social creatures and most people don’t like going against the crowd. People may conform so that they do not appear to be different or because they might doubt themselves and think that others are right. In situations where there is strong pressure to conform, just one other person who dissents is enough to strengthen anyone else who might be doubting the group. So if you are in a setting where people are bashing Christianity, you can be the voice of dissent (respectfully) which can give strength to others who might have the same convictions but lack the confidence to say something. Likewise, if you present a good case to several people that Christianity is true, but one non-believer objects (even if the objections are really bad), that will likely strengthen others in their unbelief.

– Same or nearly the same as an appeal to the majority, bystander effect, herding

– Related to agreeableness, an appeal to authority, liking, in-group/out-group bias, social proof, groupthink, reactance (opposite)

Context – We all recognize when a Bible verse is taken out of context, but we often don’t realize that context shapes our every thought. Our culture, the weather, the people we’re with, the song on the radio, how much money we make, and everything else you can think of are part of the context that shapes our thoughts. The effect can be so strong that even some optical illusions are culturally dependent. Obviously, not everything has an effect at all times, and not always to the same degree, but there’s always the chance that it might. For instance, if you just listened to an annoying song on the radio, you might be more likely to reject what I say here than had you listened to nothing or a song you like. The more we recognize the potential that contextual factors can influence our thoughts about something, the more open we should be to other views and the better we should become at discerning truth.

– Same or nearly the same as

– Related to conformity, authority, in-group/out-group bias, social proof, liking, empathy.

Contrast Effect – The tendency to judge something in comparison to something that came immediately before it. If you give an argument or a presentation after someone else, the quality of what you say will be judged in comparison to the person who spoke before you. This can help and hurt in apologetics depending on the person who went before you. This can apply to the quality of your videos, the design of your website, interviews, in-person or online conversations, and just about anywhere else.

– Same or nearly the same as

– Related to anchoring, arbitrary coherence

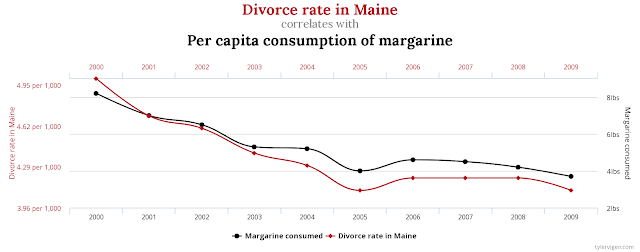

Correlation/Causation (False Cause Fallacy) – A lot of things are correlated with each other, but that doesn’t mean that one caused the other. It could just be a coincidence, they could both be caused by a third factor, or the causation could be in the opposite direction than proposed (in cases where there is not a logically necessary order). Here’s a little science secret for you: a lot of variables have very small correlations, but almost no two variables have a correlation of exactly zero. Here’s another one. Let’s say you find two variables that have zero correlation with each other. If you test the correlation in enough samples, some of those samples will show a correlation anyway. That’s how sampling works. If I flipped a coin 10,000 times and took random samples of some of the flips, I would occasionally get samples that are not 50/50 even if the overall percentage is 50/50. This is why we should avoid drawing conclusions based on small samples or anecdotal evidence whenever we can. Scientific studies control for this and rule out chance and alternative explanations, but your neighbor selling essential oils does not. This is probably the single most important error in reasoning that we should constantly consider.

– Same or nearly the same as attribution errors, the placebo effect

– Related to superstition, classical conditioning, operant conditioning, confirmation bias, belief perseverance, data fishing

For more weird and spurious correlations, go to https://www.tylervigen.com/spurious-correlations

https://www.youtube.com/embed/gxSUqr3ouYA
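The coin-flip point about sampling can be tried out with a small simulation. What follows is only a minimal sketch and not part of the original list: the function name `sample_head_rates`, the sample size of 20, the number of samples, and the random seed are all assumptions chosen for illustration. It flips a fair coin 10,000 times, draws many small random samples from those flips, and counts how many of the small samples come out at least 70/30 lopsided even though the full set is close to 50/50.

```python
import random


def sample_head_rates(n_flips=10_000, sample_size=20, n_samples=1_000, seed=0):
    """Flip a fair coin n_flips times, then draw n_samples small random
    samples from those flips and return the share of heads in each one.
    All parameter values here are illustrative assumptions."""
    rng = random.Random(seed)
    flips = [rng.randint(0, 1) for _ in range(n_flips)]  # 1 = heads, 0 = tails
    rates = []
    for _ in range(n_samples):
        sample = rng.sample(flips, sample_size)  # small sample without replacement
        rates.append(sum(sample) / sample_size)
    return flips, rates


flips, rates = sample_head_rates()
overall = sum(flips) / len(flips)
# Count small samples that look clearly "unfair" (70% or more of one side).
lopsided = sum(1 for r in rates if r <= 0.3 or r >= 0.7)

print(f"overall share of heads: {overall:.3f}")
print(f"small samples at least 70/30 lopsided: {lopsided} of {len(rates)}")
```

Increasing `sample_size` in this sketch should shrink the number of lopsided samples, which is the practical reason to distrust conclusions drawn from small samples or single anecdotes.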

Correspondence Bias – This is very close to the fundamental attribution error (FAE). In FAE, we have a tendency to attribute situationally caused behavior to the person (thinking the guy who cut me off is a jerk rather than someone avoiding an object in the road), whereas correspondence bias is when there is a known situational constraint and people still attribute the behavior to the person. The simplest example is if I am assigned a view to defend in a debate competition, people will likely think that I actually hold the view I am defending even though it was randomly assigned. I see this in religious dialogue when someone corrects a bad argument for a view both people hold, and then people assume the person correcting the argument holds the opposing view.

– Same or nearly the same as fundamental attribution error (FAE)

– Related to correlation/causation, false cause fallacy, attribution errors, reactance, framing, hasty generalization (fallacy), self-serving bias

Decision Fatigue – As we make more and more choices throughout the day, we become more mentally fatigued and less willing to put in the cognitive effort to make careful decisions. Whether this effect exists is highly debated. A recent paper suggests it does exist, just not as broadly as originally thought. In apologetics, this may come into play if you ask too many hard questions of someone. They may just get tired of answering and stop trying, in which case they may just leave, resort to name-calling, or answer without thinking (see other biases).

– Same or nearly the same as ego depletion

– Related to

Decoy Effect – When there are two competitive options, the decoy option is similar to one of them but less desirable, making the one it resembles seem best. For instance, if I am selling you a burger with fries for $5 and a chicken sandwich with fries for $5, I can add a decoy to make one sell better than the other. If I have a bunch of burgers about to go bad, I can give the option for a burger only for $4.50, making the burger with fries seem most desirable. I suspect this might be part of why some people are spiritual but not religious. They are essentially choosing between atheism, religion without rules, and religion with rules, and for many people, organized religion serves as the decoy to nudge people towards spiritualism rather than atheism. I should note that this is just a hypothesis of mine or a potential application of this effect.

– Same or nearly the same as

– Related to anchoring, dilution effect

Dilution Effect – This is the tendency for people to use irrelevant information to make a decision. In other words, the relevant information is diluted by irrelevant information. Technically, it refers to the use of nondiagnostic information (irrelevant) over diagnostic information (relevant). In apologetics, this comes into play when a person rejects what you say because of how you say it or because they don’t like you. This is how people work, so the best thing to do is to try to align the relevant and irrelevant information for your position.

– Same or nearly the same as attribution errors

– Related to liking, decoy effect, appeal to authority, confirmation bias, belief perseverance, Forer effect

Disgust – Our emotions help us make decisions very quickly and disgust might be the most powerful one, especially with moral reasoning. If we find something disgusting at any level, there’s a really strong chance we’ll say it’s wrong even though we can’t explain why it’s wrong. Even if we are merely in the presence of something that disgusts us, we are less likely to accept something as true.

– Same or nearly the same as

– Related to the affect heuristic, cognitive style, appeal to emotions.

Drop-in-the-Bucket Effect – The tendency to do nothing when our resources cannot make a significant impact on fixing a problem. I sometimes fail to use this when I talk about adoption. I cite the vast numbers of kids who need help, which is a problem no single person can fix, and so people aren’t motivated to get involved. If I focused more on individual children who need help, people would be more likely to be moved and do something to help that child. In apologetics, this is important to remember when you talk about moral issues. People will be much more concerned if there is an identifiable victim.

– Same or nearly the same as identifiable victim effect

– Related to vividness, vagueness

Dunning-Kruger Effect – My favorite bias because I think it explains so much of the world. This is the tendency for people with minimal knowledge or experience in an area to have extremely high confidence in their ability in that area. As they gain genuine expertise, their confidence dips down before starting to climb again. This is apologetics. Almost everyone thinks they are an expert on religion and science, so when you try to have an apologetics conversation, they are unwilling to listen or consider what is said because they view themselves as the expert. The original paper for this is called “Unskilled and Unaware of It.”

– Same or nearly the same as

– Related to humility (opposite), straw-man (fallacy)

Ego Depletion – See the comment above for decision fatigue. They’re the same. The only possible difference is that ego depletion is stated in terms of willpower and compares it to a muscle that can be fatigued in the short term but can be trained to grow stronger over time. The issue with this effect is that it doesn’t always show up when expected, which made people say it’s not a real effect. The research shows that it can easily be overcome, so if that’s the case, is it a real thing? The solution seems to be that it affects whether we decide to put forth cognitive effort for a decision. If we do put in the effort, there’s no effect, but if we decide not to put in effort, we become very prone to any number of other biases listed here.

– Same or nearly the same as decision fatigue

– Related to

Egocentric Bias – This is the tendency to rely heavily on our own perspective without considering evidence from other people or sources.

– Same or nearly the same as over-confidence

– Related to self-serving bias, the false-consensus effect, the Dunning-Kruger effect, pride, confirmation bias, Hyperactive Agency Detection Device (HADD)

Enactment Effect – When we perform (enact) a task, we are more likely to remember it than things we don’t perform. If people better remember what they do, then the things they do are going to be more influential on their decisions and what they believe is true. Although it’s not strictly the same thing, experience works in a similar way. Ultimately, people are less likely to remember, and believe as true, things they have not done or experienced.

– Same or nearly the same as

– Related to the availability heuristic, endowment effect, generation effect, self-reference effect, mere exposure effect

Endowment Effect – The tendency to overvalue something we own simply because it’s ours. Our stuff has memories and emotions attached to it which other people don’t see or value. The basic idea seems to apply to worldviews, religious practices, personal sins, and social groups too. These things are ours and are part of us and we don’t want to give them up easily.

– Same or nearly the same as mere ownership effect.

– Related to commitment bias

False Consensus Effect – The tendency to think something is more normal than it really is. The classic example is premarital sex in high school. People, especially high school students, think “everyone is doing it,” but the research shows that more than half of high school students are still virgins when they graduate. The most prevalent example for apologetics is the tendency people have to think scientists or intellectuals are more atheistic than they really are.

– Same or nearly the same as

– Related to the availability heuristic, appeal to the majority (fallacy)

False Memories – People tend to think that we remember things, especially important things, exactly as they happen, but that’s just not the case. We often misremember and our memories change over time, particularly with the peripheral details that aren’t really relevant to the overall point. This is even the case when we remember things very clearly such as 9/11. This is one of the best challenges to the resurrection, that by the time the story of the resurrection was written down, it had already changed due to false memories or imagination inflation. The problem with this theory is that it doesn’t match the way in which our memories change and cultural factors. The resurrection occurred in an oral culture that prized memory and storytelling, so what really happened would have been remembered just fine. We tend to mix up small details, such as the day of the crucifixion, not the main event of a story, so it’s just not plausible based on memory research that the resurrection is the result of memory failure.

– Same or nearly the same as

– Related to Mandela effect, misinformation effect, mere exposure, vividness/vagueness, imagination inflation, confirmation bias, critical lure, deja vu, flashbulb memories

Familiarity – We like things that are familiar. We are more likely to choose familiar things and consider familiar statements as true. I think this is a major contributing reason why apologetics gets a bad rap from many inside and outside the church: it’s unfamiliar to people, and before they have the time to digest the concept of evidence for faith, they’re bombarded with arguments and it leaves them with a bad feeling.

– Same or nearly the same as the mere exposure effect, the availability heuristic

– Related to optimal distinctiveness

First Impressions – We form unconscious impressions about people almost immediately, and they’re often based on unreliable information such as their posture, tone of voice, how they look in the lighting of the room, their physical appearance, their clothes, and so on. Not only that, but these first impressions are very hard to change. This is why it’s important to always be prepared to give an apologetic (1 Peter 3:15) both mentally and physically. We can’t always be ready for a positive first impression with everyone, but there are key moments when we can do better or have our guard up. For instance, I dress just a little bit nicer the first day I teach a new class each semester and start the class in a positive way so my students have a good first impression and are therefore more likely to participate in class and learn better.

– Same or nearly the same as

– Related to intuition, thinking style, liking, aesthetics

Focusing Effect – The tendency for people to focus on a small detail or one aspect of something instead of the overall picture. In common words, it’s losing the forest for the trees. This happens in apologetics when people get hung up on details that are often irrelevant or they are unwilling to move beyond a certain issue. For instance, a skeptic may focus so heavily on evil that they are unwilling to recognize the broader point that there is no such thing as evil without a moral lawgiver or that there are other arguments that show God exists.

– Same or nearly the same as the availability heuristic, cherry-picking (fallacy)

– Related to affect heuristic, confirmation bias, red herring (fallacy)

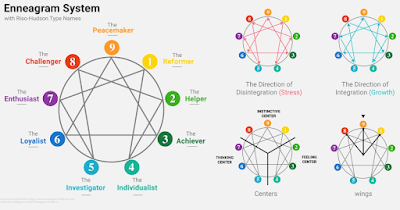

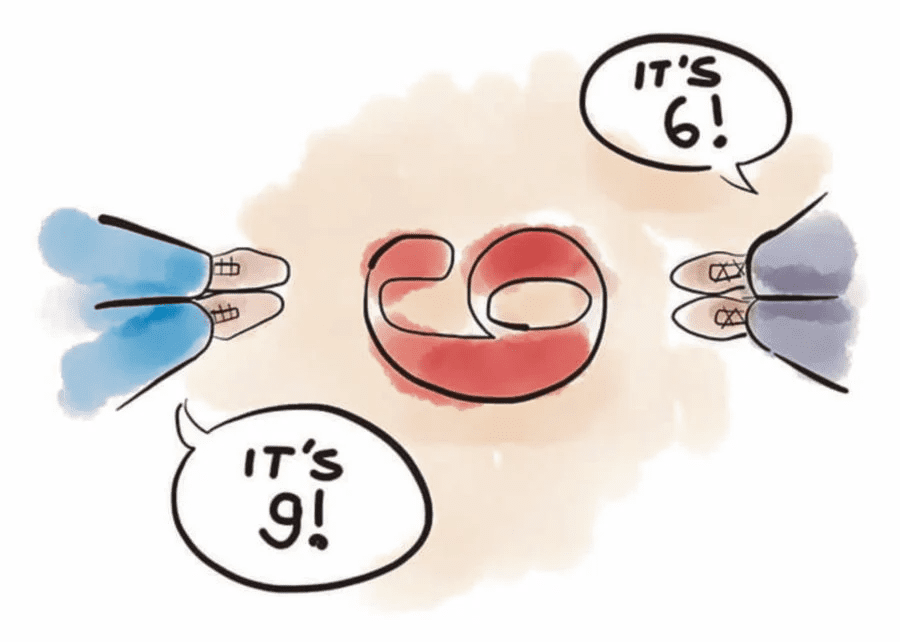

Forer Effect – The tendency for people to accept very broad or generalized statements about their personality as being uniquely true of them as opposed to recognizing they are largely true of most people. This basically explains the current trendiness of the enneagram, even though it is not scientifically valid. This may play a role in apologetics because people might be susceptible to view themselves in a way that could be beneficial or harmful for apologetics conversations. Trying to prime people to view themselves as rational, careful thinkers, respectful people, and so on, can help set up conversations so they are more effective.

– Same or nearly the same as the Barnum effect

– Related to the availability heuristic, confirmation bias, self-serving bias

Framing Effect – The way something is presented, or framed, can affect our conclusions about it. For instance, saying something is 99% effective sounds better than saying it only fails 1% of the time. In one of my presentations, I show a clip from Brain Games where a cop asks witnesses how fast a car was going when it bumped/smashed into the other vehicle. By changing just one word, witnesses report drastically different speeds. In apologetics, our words matter. When we frame another worldview as ridiculous, those who agree with us and some in the middle will likely find it very convincing; however, unbelievers will feel as though we’re not honestly representing their view and disregard what we say. Another example is how we present Christianity. Do we present it in a positive light so people want to follow it or are we simply known for all the things we’re against? People are more prone to accept something, or at least listen, when it is presented in a mostly positive way (not to say you can’t or shouldn’t mention the struggles of being a Christian).

– Same or nearly the same as

– Related to affect heuristic, appeal to emotions (fallacy), arbitrary coherence, fundamental attribution error (FAE)

Functional Fixedness – This is the tendency for people to view something only in terms of its intended purposes, preventing us from seeing alternative uses. The ability to break this pattern is what made MacGyver popular. In other words, this bias is thinking inside the box, so the antidote (as if it’s just that easy) is to think outside the box. In apologetics, I find people sometimes have a fixed view of what Christianity is or what their identity is (“I’m a doctor and doctors aren’t religious”) which prevents them from seeing Jesus. If you notice this might be an issue, it’s easy to overcome as long as you don’t point it out in a condescending way.

– Same or nearly the same as

– Related to the availability heuristic, creativity, confirmation bias

Fundamental Attribution Error (FAE) – This is when we make an error in attributing something to someone or something. Usually, it’s used to refer to blaming people (personal attribution) for things that were not within their control, or not completely within their control, and then we often associate the act with their character. If you cut someone off in traffic, even if it was an emergency or they unknowingly swerved into your lane, they will likely blame you for it and if you have a Jesus sticker on your car, they’ll pass that judgment onto Him. Similarly, if you make a mistake about a fact (or they think you make a mistake), they will attribute that to your character and probably your intelligence. This is why it’s extremely important to be careful with our words, fact-check everything, and speak to others with grace.

– Same or nearly the same as correspondence bias

– Related to false cause fallacy (aka causal fallacy), confirmation bias, hasty generalization (fallacy), self-serving bias

Generation Effect – Information that we come up with ourselves is more likely to be remembered than information from others.

– Same or nearly the same as

– Related to the endowment effect, enactment effect, self-reference effect, mere ownership effect, Ikea effect, not-invented-here (NIH) effect

Group Polarization – The tendency for the views of two groups to move further apart after discussing the topic. The obvious example is politics. Let’s say a group of Democrats and Republicans have slightly different views on a topic when they start a conversation about it. After the conversation, they will likely move further apart. This happens for a variety of reasons, some rational and some not. Talking about the issue may help them think about it more, helping them realize their previous view was inconsistent or poorly thought out. However, it may also be due to knee-jerk reactions against the other group, an unwillingness to compromise and seem weak, following a charismatic leader, or many other reasons. In apologetics, this can happen in group discussions between Christians and other groups or during debates. To overcome this, it’s important to be respectful of others and build relationships so they don’t view you as an enemy who needs to be defeated. It’s very hard for someone to agree with a person they view as an enemy, even when it’s common sense. We have an automatic reaction to disagree with enemies or people we don’t like.

– Same or nearly the same as the backfire effect

– Related to affect heuristic, commitment bias, conformity, groupthink, ingroup/outgroup bias, liking, obedience to authority

Groupthink – When groups have a strong desire to conform or be unified, they have a tendency to accept ideas too quickly and without critical thought, leading to bad decisions. This can also happen when the group leader or the environment punishes dissent. This is different than ingroup bias or group polarization in the sense that it stems from a desire within the group to get along and an unwillingness to risk the consequences of dissent (notice how I didn’t name specific errors I think many Christians make theologically 😉). This is hard to get around in social media because if someone does speak out against their side, they are criticized by both sides. If they see someone else do it, they often don’t want to stick their neck out in support so they might passively like something or just refuse to comment about it.

– Same or nearly the same as conformity

– Related to commitment bias, false-consensus effect, hasty generalization (fallacy), in-group/out-group bias

Halo Effect – This is the tendency for us to globalize a positive attribute of a person from one domain to another. For instance, if someone is physically attractive (or any other noticeable positive attribute), we’re more likely to rate them as more intelligent, more competent, more trustworthy, and so on. While this effect solely focuses on positive attributes, the same applies to negatives so that if a negative attribute stands out to someone, they are more likely to apply that to us in other domains too. This is why it’s extremely important in apologetics and evangelism to make good first impressions with people, to speak respectfully, dress and look respectable (not to be confused with being vain), be kind, and so on. People are much more likely to listen to apologetic arguments or the gospel if something about us (or many things) stands out as being very positive.

– Same or nearly the same as

– Related to affect bias, availability heuristic, Dunning-Kruger effect, first impressions, hasty generalization (fallacy), liking, representativeness heuristic

Hawthorne Effect – This is when people change their behavior when they’re aware of being watched or think they’re being watched. It’s obvious that this happens, at least to some degree, but people sometimes underestimate how big the effect is and how easy it is to invoke it. Some studies have found that simply putting a picture of a face in certain places can get people to act better, although the effectiveness of this small of an intervention is debated. This is seen in public conversations such as on social media or other public venues because people are more likely to stick with their group’s views on a topic rather than seriously consider other views for fear of being condemned by their group.

– Same or nearly the same as

– Related to group polarization, ingroup/outgroup bias, self-serving bias

Hedonic Treadmill/Adaptation – The tendency for people to adapt to things that are enjoyable so that it becomes their new expected standard. For instance, if you won the lottery, you would be ecstatic but you would slowly return to your previous levels of happiness, and worse, expect your quality of life to always remain the same so that you would be disappointed getting less than you had previously. If you buy a nice car, you will get used to the comforts and advantages of it so that when it comes time for a new car, you will expect the same or better, even if you don’t really need all the luxuries. The application is more theological than apologetics. When we become accustomed to the comforts of American life, we tend to be calloused toward people around the world who aren’t so well-off and we become unwilling to make sacrifices in our life for them. This affects how people view us when we do apologetics, but also the amount of time we spend studying or doing apologetics (or Bible study, prayer, etc.). When we become accustomed to Netflix xx hours per week, it’s hard for us to give that up so that we can read, do evangelism, serve the poor, etc. We then rationalize that we somehow deserve such rest because we work so hard at other times (we should indeed rest, but we don’t need nearly as much as the American lifestyle affords us).

– Same or nearly the same as

– Related to anchoring, halo effect, identifiable victim bias, foot-in-the-door technique, routinization, normalization, desensitization.

Herding – The tendency to follow the crowd as if we are a herd. This is a very useful heuristic, especially in unfamiliar places (e.g. traveling to a new country), but it can often lead to false conclusions. Many people have false views about Christianity because of this. They get their theology from popular media sources, leading them to think Christianity is intellectually bankrupt and faith is blind, and so they just go along with the crowd. This especially relates to sexuality and gender.

– Same or nearly the same as the appeal to the majority, bandwagon fallacy, false-consensus effect

– Related to in-group/out-group bias, self-herding

Hindsight Bias – The tendency to look at events from the past as having been obviously predictable. In other words, we look at past things with blinders on due to changes in culture or increased knowledge about something. We look at slavery as wrong today, and rightfully so, but because it’s so culturally ingrained in us, skeptics sometimes have a hard time understanding slavery that is discussed in the Bible.

– Same or nearly the same as

– Related to affect bias, availability heuristic

Hot-Hand Fallacy – This is often discussed in terms of basketball, which is how it was discovered. Researchers found that when a person is perceived to be on a hot streak, observers think that person is more likely to make the next shot; however, the data show this is not the case. The relevance of this in apologetics is that our immediate intuitions are not always correct. When looking at rates of abortion, effects of gun control, crime and religiosity, and so on, we can’t just cite a statistic and give a simple explanation (this goes for people on all sides). Sometimes the obvious conclusion is correct, but we need to look around for other data and the best explanation for something.

– Same or nearly the same as apophenia, regression to the mean.

– Related to blindspot bias

Hyperactive Agency Detection Device (HADD) – This is our hyperactive tendency to attribute intentionality to agents or random events. Some have proposed this as the foundational reason people believe in God. The research shows that Christians aren’t necessarily more likely to attribute agency to non-agents, but people with New Age types of beliefs are. Christians need to be able to respond to this objection regarding religious belief and at the same time, they also should be more careful when attributing random events to supernatural beings (God, angels, satan, and so on).

– Same or nearly the same as apophenia, just world beliefs, correlation/causation, pareidolia, false cause fallacy

– Related to conditioning, attribution errors, fundamental attribution error (FAE)

Identifiable Victim Effect – The tendency to be more compassionate toward a single person in need rather than a large group of people. Even though it seems like we should be more heart-broken over a million starving people than just one, the research shows we are more likely to act and give more for a single person than for a group. This is why many charities will feature a single person in need rather than a whole group. For apologetics, talking about the 120+ million people killed by atheists in the 20th century is less powerful than focusing on a single victim in greater detail. Ideally, both points would be made for the greatest impact, although sometimes combining reasons increases the probability that the backfire effect will happen.

– Same or nearly the same as

– Related to drop-in-the-bucket effect, vividness, vagueness

Ikea Effect – When we place more value or importance on something that we build. In apologetics, if you tell someone the evidence for Christianity and give them the answer, they are likely to feel like your answer is not as good as theirs because they did not come up with it, and therefore, they will resist you. A better approach might be to tell people of certain facts or create hypotheticals based on the facts and then ask them to come to a conclusion based on those facts.

– Same or nearly the same as the endowment effect, mere ownership effect, not-invented-here (NIH) effect

– Related to the genetic fallacy

The Illusion of Control – The tendency to overestimate our ability to control things. This affects Christians who might think they have more control over another person’s beliefs than they actually do. This seems to be part of the equation for people who want to push for government laws restricting behaviors that do not align with Christianity with the assumption that it will be more effective than it actually is. This is not saying there isn’t a place for laws restricting certain behaviors, but it’s the overestimate of the effectiveness of these laws that is the bias.

– Same or nearly the same as

– Related to apophenia, compensatory control, gambler’s fallacy, superstition

Illusory Truth Effect – The tendency to believe false information is true after hearing it over and over again. Essentially, it’s not the correctness that is remembered, but the content, so when people recall it, they remember it as true, unless of course they explicitly recognized it as false and argued against it. The main takeaway for apologetics is that people are likely to reject apologetic arguments when they’re new to them. This means we don’t need to be pushy or overbearing with people. We can and should take the long view and give them a little something to chew on time and time again. The goal isn’t to get them to believe false information, but to help remove an emotional barrier to something that seems new and strange.

– Same or nearly the same as mere exposure effect

– Related to the appeal to the majority, the false-consensus effect

Imagination Inflation – When we imagine something, we are more likely to remember that thing as something that actually happened. This is a legitimate objection for atheists to the resurrection that needs to be seriously dealt with by believers. However, the mechanisms of this effect are not nearly powerful enough to explain the resurrection because it would need to be argued that the resurrection was remembered correctly as not happening, but then imagined by someone (presumably multiple people), then that person or group of people didn’t discuss the resurrection idea they concocted for a long period of time, but when they did finally recall it, they remembered what they imagined rather than what really happened.

– Same or nearly the same as

– Related to false memories

Inattentional Blindness or Selective Attention – Strictly speaking, this is more of a perceptual error when we are so fixated on one thing, we miss surrounding cues. If you’ve ever seen the gorilla basketball video, that’s an example of this. However, the same general thing occurs when we are so sure we are correct about something that we just blatantly miss or don’t remember things that oppose our view. This is likely why atheists so often use incorrect definitions of faith or repeat the same misunderstandings about the kalam (e.g. who created God) even after they’ve been corrected. The correction just doesn’t register with them because they’re so sure they’re right. On the other hand, I see apologists get so fixated on pedantic details of an objection to Christianity that they lose the whole point of what the other person was saying.

– Same or nearly the same as

– Related to the availability heuristic, belief bias, confirmation bias

Individualism – This is more of a cultural or personality factor, but it definitely biases our decision-making. For people in individualistic cultures or people high on an individualistic scale (similar to reactance), anything that appears to violate their personal freedom will be viewed negatively. Politically, this correlates with libertarian and conservative views, which is where I get the sense that many apologists align. This should cause some apologists to question whether they’ve really based some of their theological and political views on Jesus or if it’s more based on their desire for individualism. I’m not saying their views are wrong; only that they should be carefully scrutinized. In apologetics, this is often the underlying reason people so strongly revolt against God’s moral standard, because they don’t want to be told what to do, even by an all-knowing, all-loving God.

– Same or nearly the same as reactance

– Related to reactance, conformity, collectivism

Inequality – We actually think in different ways by prioritizing different things when we feel unequal to others, regardless of whether we really are unequal or are well-off. Money is the most obvious and most studied domain for how inequality affects reasoning but it’s likely not limited to money. Higher levels of inequality (or feeling higher levels of inequality) lead people to prioritize short-term over long-term outcomes in their decisions or to rely more on intuition and emotion for their decisions rather than higher-order reasoning ability. Think about how this might affect someone when they feel that their discussion partner is condescending to them or far more knowledgeable in an area. We want to think they should give in and admit they’re wrong, but in most cases, they’re likely going to stick stronger to their current view.

– Same or nearly the same as

– Related to the time perspective, money, backfire effect

In-group/Out-group Biases – These are two different biases, but they’re often used to describe the same thing and used interchangeably. The reason is that they’re just two sides of the same coin. We are biased in favor of our own group and against other groups. When someone from our own group does something good, we apply it generally to the group as the norm and claim it as an example of the individual’s character or competence. When someone from the ingroup does something bad, we rationalize it or we blame it on the individual. The opposite happens with the outgroup. When a member of the outgroup does something good, we apply it only to the individual as an exception to the norm or we try to explain it away as being a product of the situation or not all that good after all. When someone from the outgroup does something bad, we apply it to the whole group and view it as the norm. Apologetics is a quintessential example of ingroup/outgroup behaviors. People on all sides are guilty of jumping on the bandwagon of bad arguments and rationalizing. About the only thing you can do is to be aware of this bias so you can try to avoid falling into it yourself and work on building relationships with people in the outgroups so they don’t view you as an adversary.

– Same or nearly the same as self-serving bias (but applied to groups)

– Related to the appeal to the majority (fallacy), bandwagon effect, fundamental attribution error, liking

Intelligence – We highly prize intelligence in our culture and most of us probably think that increasing intelligence or education is the key to overcoming bias. However, the research doesn’t support this. While intelligence and education can help us overcome some biases, they increase our proneness to other biases. There are likely several reasons for this, the most obvious being that smarter people are more likely to think they’re right already and therefore, less likely to rethink their position when they encounter other views or new evidence.

– Same or nearly the same as

– Related to belief bias, confirmation bias, personality, stereotyping, pattern recognition or apophenia

Just World Beliefs – On one level, we know that sometimes bad things happen to good people and good things happen to bad people. However, at another level, we tend to act and think as though people always get what they deserve, whether God, karma, the government, or some other force is ensuring the world is just.

– Same or nearly the same as

– Related to compensatory control, the illusion of control, HADD

Language – Language has a reciprocal relationship with our thoughts and actions. They both affect each other in an endless cycle. The words we choose to use, the words we hear or read, and the construction of the language(s) we speak all affect the way we think and act. We like to think that we see the world objectively, but at least part of the way we see the world is dependent on the language we speak (see context, egocentric bias, and time perspective). One famous study asked people how fast a car was going when it bumped or smashed into another vehicle and the change of that one single word affected the estimates by about 20 mph. Words have meaning and they can have a huge effect on the way we see others and the way they see us, and in turn, how they see Christ.

– Same or nearly the same as

– Related to context, egocentric bias, and time perspective

Liking – When we like someone, we’re much more likely to listen to them, be persuaded by them, give them the benefit of the doubt, and so on. If you want to be a more effective apologist, be kind and respectful of others and they will be much more likely to listen.

– Same or nearly the same as

– Related to affect bias, the halo effect, ingroup/outgroup biases

Loneliness – We all know what loneliness is, but we’re not always aware of its effects or the extent to which it can affect people. You can be lonely when you’re with others (even close family and friends) or when you’re alone. When people are lonely, they’re at very high risk for depression, but they also have a higher risk for death, and not just through suicide (stroke, heart attack, car accidents, etc.). People who are lonely are also in need of close friendships and will sometimes do just about anything to not feel lonely anymore, including changing their religious beliefs to whatever the people who accept them believe, even if that group is a cult.

– Same or nearly the same as

– Related to depression, affect heuristic, appeal to emotion, liking

Loss Aversion – The tendency for potential losses to play a bigger role in our decisions than potential gains. This is likely why people are more willing to settle with what they have than risk losing it for something better. Worldviews are an excellent example. If a person has a worldview that seems to work, and they have a group of friends who share that worldview, they are not easily going to risk giving that up for Christianity. They will fight to show that their worldview is better and even if you can show Christianity is a better worldview, they may not be willing to accept it.

– Same or nearly the same as negativity bias

– Related to the affect heuristic, availability heuristic, belief bias, confirmation bias, endowment effect, mere ownership effect, sunk cost fallacy, and status quo bias

Luck (Coincidence) – Sometimes coincidences happen and we get lucky. Sometimes we even get lucky a lot. We need to be careful not to attribute luck to other causal factors such as skill, God, karma, and so on.

– Same or nearly the same as

– Related to survivor bias, egocentric bias, self-serving bias, in-group/out-group bias, attribution errors, just world beliefs, HADD, superstition, false cause fallacy, correlation/causation

Mandela Effect – This is when someone misremembers something, such as Nelson Mandela dying (how the effect was named), and then a large number of people believe it. It’s spread to broad cultural norms and details of pop-culture. There are actually several online tests you can take to demonstrate this effect and give you a better idea of what it is. Here’s just one of them. This is perhaps one of the strongest arguments against Christianity, specifically the resurrection, but critics don’t use it. It’s better than the swoon theory, hallucination theory, and all other attempts to explain away the resurrection, but it still falls short. This effect can’t explain eye-witness accounts or the reports of Paul and the apostles performing miracles in the name of Jesus, and it doesn’t take into account the memory ability of people in the first century.

– Same or nearly the same as DRM procedure, false memories

– Related to imagination inflation, egocentric bias

Mere Exposure – This is the tendency to be more willing to accept things that we’ve been exposed to before. In other words, new things (like evidence for Christianity) are strange to us and seem unlikely to be true so we reject them. Don’t expect people, even other Christians, to accept the arguments for Christianity the first time they hear them. They’ll likely need several exposures to the idea of rational faith and evidence before they’ll be open to accepting it.

– Same or nearly the same as

– Related to optimal distinctiveness

Mere Ownership Effect – The tendency to overvalue items that we own. This is studied with physical objects by seeing how much people will buy and sell things for, but there’s no reason the same effect doesn’t apply to things like worldviews. This is likely one of the many reasons it’s hard for people to change their beliefs, even on small topics.

– Same or nearly the same as the endowment effect

– Related to Ikea effect, not-invented-here (NIH) effect, enactment effect

Misinformation Effect – This is when information after an event affects our memory of the event. The classic example of this effect is from a 1974 study that showed people a film of a car crash. Participants were asked how fast the car was going when it either collided, bumped, contacted, hit, or smashed into the other vehicle. This change of a single word affected their estimates of the car’s speed and one week later, those in the smashed condition were more likely to say they saw broken glass. This is a potential argument against the resurrection; however, this effect cannot explain something as big as a person rising from the dead or the fact that the NT authors witnessed several other miracles and did miracles themselves.

– Same or nearly the same as

– Related to DRM procedure, false memories, imagination inflation, Mandela effect

Moral Licensing – This is the tendency we have to let ourselves off the hook for moral behaviors because of past or other moral behaviors. For instance, someone might justify littering because they recycle. Moral licensing doesn’t always happen and it’s hard to predict, but it does happen. Christians need to be particularly careful about this because prayer can be the ultimate moral license for inaction. We should pray continually, but prayer should not be a substitute for action and sacrificial love, even if we pray a lot.

– Same or nearly the same as

– Related to rationalization, cognitive dissonance

Myside Bias – The tendency to favor evidence and arguments that support what a person already believes. My favorite study on this asked participants to state whether deductive syllogisms were valid and they scored around 70%, but when asked to do the same for abortion syllogisms opposing their own view, they dropped to about 40%. When doing apologetics, you need to find ways to bring up and discuss topics in a safe and unemotional way so that people will be willing to think instead of rejecting it without much thought.

– Same or nearly the same as confirmation bias

– Related to belief bias, belief perseverance

Negativity Bias – Also known as the negativity effect, this is our tendency to focus on and remember negative things more than positive things, to more heavily weigh negative factors in decision making, or to perceive ambiguous cues as negative. In short, negative thoughts and experiences are very powerful in our lives and hard to overcome. This is why negative experiences in the church or with Christians are such a common factor among non-believers even though they are logically irrelevant to whether Christianity is true. Additionally, if you speak to someone who has a negative view of Christianity, you can present the gospel or an apologetic argument in a neutral or even somewhat positive way and they might still interpret it negatively. This is just one reason why our tone, style, and words are so important when doing apologetics.

– Same or nearly the same as

– Related to in-group/out-group bias

Normalization – When something (usually something strange or rare) is repeatedly encountered and eventually is accepted or viewed as normal. We’ve seen this happen in society in several areas such as sexual ethics, gender, debt, divorce, and others. The notion that faith can be rational is super strange to many people and doesn’t fit in with their schema for religion. This is why I started Apologetics Awareness to help get people familiar with the idea that faith can be rational. The best way to use normalization to your advantage is to present the thing you want to be accepted in a positive and non-controversial way. For Apologetics Awareness, the focus isn’t on arguing with people or presenting arguments; it’s about letting people know there is evidence and you can be a thinker and a believer. It’s a subtle but important difference because consistent negative experiences will harden people to what you’re saying.

– Same or nearly the same as mere exposure effect, systematic desensitization, habituation

– Related to the availability heuristic, foot-in-the-door technique

Not-Invented-Here (NIH) Effect – This is when we reject an idea or undervalue something because we did not come up with it. Simply put, people don’t like to be wrong and don’t like it when someone else knows more than them. A great way to get people to think they’ve come up with an idea of their own is to ask leading questions, preferably about things they likely haven’t thought about before. This is different from asking questions that trap a person in a corner. With this effect, you want to use less direct questions and avoid answering the question yourself (or pointing out how their views are contradictory). This can also be used with the mere exposure effect because once people are aware of an idea, even if they can’t name it, their brain may unconsciously stumble upon it through repeated conversations.

– Same or nearly the same as the Ikea effect

– Related to mere exposure effect, self-serving bias, mere ownership effect, endowment effect.

Obedience – People are generally more obedient to authority figures than they would be otherwise. There are certainly many exceptions to this and other factors to consider so this one can be hard to use in practical situations. The best way to use this is to understand who you’re talking to and present yourself in a way that they would consider an authority. In most cases, this means dressing and speaking professionally, but with some people, it might mean presenting yourself as somewhat of a rebel. Additionally, citing people or institutions the other person considers authoritative can also be effective. If the person is an atheist, try to cite what other atheists have said that supports what you are trying to say.

– Same or nearly the same as conformity, expertise, appeal to authority (fallacy)

– Related to liking, reactance (opposite)

Openness (Personality) – Openness to experience is one of the five major personality factors that affect people and it plays an important role in decision making. People who are high in openness are likely to be more liberal and more likely to consider and accept alternative viewpoints, but they may be too comfortable with contradictions or ambiguity and unwilling to commit to a view. People low in openness are unlikely to even consider new evidence presented to them in the first place, and even if you can get them to consider it, actually changing views will be extremely hard for them.

– Same or nearly the same as

– Related to confirmation bias, belief perseverance

Operant Conditioning – This is just rewards and punishments. We don’t often think about how we interact with people in terms of rewards and punishments, but our brains process conversation cues in the same way. When you cut someone off when talking, criticize them or their view, or point out a logical fallacy, their brain processes that as though they are being punished for talking. There are two solutions for this: they will recognize they are wrong and change their view or they’ll just decide to stop talking to you, at least about religion. While both options are possible, it’s much more likely they’ll just stop talking to you. Instead of being critical, thank people for sharing, ask authentic questions, praise the positive things they say, and draw attention to areas of agreement instead of just focusing on disagreements.

– Related to classical conditioning, in-group/out-group bias, liking, negativity bias

Optimal Distinctiveness – We like to be unique but we also like to be part of the crowd. How do we balance these two common desires that most people have? We do it through optimal distinctiveness. For each person, there is some optimal level for how comfortable they feel being part of the crowd versus being distinct. Keeping this in mind, we can sometimes appeal to people’s desire to be distinct and sometimes appeal to their desire to be part of the crowd.

– Same or nearly the same as

– Related to mere exposure effect, cognitive ease, bandwagon effect, scarcity

Pareidolia – A specific type of apophenia (above) that involves seeing patterns in random visual or auditory stimuli. In other words, it’s seeing Jesus on a piece of toast or hearing a hidden message in songs that are played backward (excluding intentional exceptions for both of these 😀).

– Same or nearly the same as apophenia, agenticity, patternicity

– Related to

Placebo Effect – This is when something affects us simply because we believe it will affect us. The obvious example is when sugar pills (placebos) are given in place of medication during drug testing and the sugar pills actually improve people’s health; however, this effect is much broader than just medicine. Believers seem to be especially susceptible to this effect. The placebo effect can largely explain why things like amber beads, essential oils, and other alternative remedies seem effective (obviously with rare exceptions where clinical trials have shown them to be effective). The importance of understanding the placebo effect for apologetics is mostly in recognizing how non-believers might view us when we mistake the placebo effect for a real effect. If I am talking to a thoughtful atheist and I tell her that I was healed by essential oils, she will likely think it was really just the placebo effect and that I am too biased or ignorant to know better. The same is true for many claims people make about prayer. It’s extremely hard to convince people to change their views on something, but it’s even more difficult if they think you are unintelligent.

– Same or nearly the same as

– Related to attribution errors, base-rate fallacy, apophenia, superstition, classical conditioning, self-fulfilling prophecy

Positivity Bias – You might be thinking, hey, I thought we have a negativity bias, now you’re telling me there’s a positivity bias? The difference is whom the bias is directed toward. People are more likely to expect positive things, have positive memories, or have positive evaluations about themselves or their group, whereas the negativity bias applies to others and the out-group. To overcome this, you need to present arguments in a positive way, ask questions so people come up with the answer on their own, and praise them when possible.

– Same or nearly the same as self-serving bias

– Related to confirmation bias, in-group/out-group bias, not-invented-here effect, liking.

Prejudice – This is when we are biased against a particular group. Obviously, this applies to race; however, research shows that people tend to be heavily biased against atheists and other religious groups regardless of race. Additionally, prejudice can be explicit or implicit. Explicit prejudice is when someone admits (perhaps only privately) they are racist or against a group. Implicit bias is when someone is unaware that they are biased or prejudiced against someone. The degree to which the most common test for this, the Implicit Association Test (IAT), actually reveals implicit bias is debated, but regardless, it’s almost certain we have some level of prejudice against several out-groups. For apologetics, this means that people we talk to may have implicit biases against us that they are unaware of, but it also means we may have biases against other groups of people, and these biases can prevent both sides from listening to and understanding each other.

– Same or nearly the same as out-group bias

– Related to in-group bias (opposite), stereotypes, discrimination

Priming – Priming is when our brains are prepared (primed) to think a certain way or about a certain topic. Consider the trick where you ask someone to say “ten” ten times and then ask them what soda cans are made of. They are likely to say tin instead of aluminum because their brain is primed to think of things like ten, and since tin sounds like ten and is a metal like aluminum, it pops into their mind right away. The importance of this for apologetics is understanding how our environment, single words, or just about anything else can prime the person we are talking to, which will affect the way that person engages our arguments. We can prime them to be argumentative and intuitive, which would not be helpful, or we can prime them to be open and thoughtful.

– Same or nearly the same as

– Related to the availability heuristic, framing

Processing Style (Top-Down vs. Bottom-Up Processing) – We all process information simultaneously in a top-down and bottom-up fashion. Top-down is when we see the big picture and work down to the details (seeing the forest before the trees) and bottom-up is when we add details together to understand the whole (seeing individual trees before recognizing a forest). This isn’t really a bias, but it can influence our decisions by limiting the way we see something or by distracting us from the real question. If we’re debating whether a patch of trees is a forest, getting caught in a discussion about whether one single tree is really a bush is irrelevant to the larger picture and can distract us from finding a solution.

– Same or nearly the same as

– Related to framing, red herrings (fallacy), reductionism (philosophy)

Projection – We all have a tendency to assume that other people think or feel the same way we do. This is projection and it’s one of the few ideas Freud had that has stuck around. If we get heated in a conversation, we are likely to think the other person is heated too or that they are the only person who’s heated. This can taint the way we view an interaction with others or how they view it, which is all the more reason to avoid argumentation and making people angry. If you do that, even if you’re right, they will likely project their anger on you and view you as the belligerent person and disregard everything you said. People are much more likely to remember how you make them feel rather than the content of your arguments so as much as possible, avoid saying things that will anger people.

– Same or nearly the same as

– Related to self-serving bias

Psychological Distance – This is a term used to describe how close or far something is from us psychologically. A picture of a crime scene has a greater psychological distance than being at the crime scene. Telling a story about sheep that’s analogous to a real-world situation creates more psychological distance than calling someone out directly (which is how Nathan approached David in 2 Samuel 12). When we have psychological distance from something, we can think about it more rationally and carefully; however, it may also cause us to overlook important personal aspects of the topic. Additionally, when we have psychological distance from something, we are less likely to see the immediate application of it to our own lives and we can let our guard down so that we end up liking what is wrong. Media bombards us throughout the day with messages that seem psychologically distant from our theology, but they end up shaping our theological views. At the same time, creating psychological distance can also help people think through difficult topics.

– Same or nearly the same as

– Related to mere exposure effect, liking, appeal to emotion, affect bias

Reactive Devaluation – We have a tendency to automatically react negatively toward beliefs, actions, or proposals from people or groups that we disagree with. This means that an atheist will be more likely to disagree with something a Christian says even if it is non-controversial, and similarly, Christians are likely to do the same with people of other worldviews. This is why sometimes it can be really hard to find common ground.

– Same or nearly the same as out-group bias, reactance

– Related to revenge

Revenge – It may seem surprising, or not, that humans have a strong inclination to get revenge on others, so much so that people will often knowingly harm themselves in order to get revenge. This relates to our sense of justice or fairness, which is one of the primary factors that influence people’s moral choices. If you embarrass someone by proving them wrong, they are probably more likely to respond by seeking revenge than by changing their view. In most cases, revenge will be nothing more than passive-aggressive remarks or subtle insults, but sometimes it is blatant, and on rare occasions it may be dangerous.

– Same or nearly the same as

– Related to backfire effect, forgiveness (opposite), reactance, retributive justice

Reactance – This is a personality factor and it is the tendency to react against someone, usually an authority figure, by doing the opposite of what they say. It’s often not a rational response, but people will justify their reactive actions rationally (at least in ways that sound rational). For instance, someone high in reactance might immediately decide not to wear a mask during the pandemic and only later come up with reasons to support this (and often they will be conspiracy theory types of explanations). In regard to religion, it seems like a lot of former Christians left the church because they didn’t like God, the Bible, or their church leaders telling them what to do. Simply arguing with them about those reasons, or about the intellectual reasons they offer as post hoc justification, will only convince them more that they are correct.

– Same or nearly the same as

– Related to authority, compliance, backfire effect, obedience, revenge, retributive justice

Regression to the Mean – This is a statistical phenomenon: extreme results tend to be followed by more typical ones, so unusual streaks wash out over the long run. Imagine you flip a coin a billion times. What will the result be for heads and tails? Likely it will be almost exactly 50% each. However, if you look at a random set of flips within that billion, there might be instances of 10 or even 20 heads in a row. Our lives are composed of billions of moments and opportunities for apparently rare things to happen, so we need to be careful not to draw generalized conclusions from these statistically inevitable but rare events. Believers are particularly guilty of this when they claim every coincidence in life is the active work of God. Non-believers may not know the term regression to the mean, but they’ll still view you and your claims as nonsensical. I’m not saying you shouldn’t recognize God in your life, but you should be aware of how others might view what you say.

– Same or nearly the same as apophenia, pareidolia

– Related to the base rate fallacy
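
For readers who want to see the coin-flip illustration play out, here is a minimal simulation sketch in Python. The function name simulate_flips, the seed, and the choice of one million flips (rather than a billion, to keep it fast) are my own assumptions for illustration. It only shows that a long streak and an overall share near 50% can sit side by side in the same set of flips.

```python
import random


def simulate_flips(n_flips, seed=0):
    """Flip a fair coin n_flips times.

    Returns (share_of_heads, longest_run_of_heads).
    The seed only makes the run repeatable; it is an arbitrary choice.
    """
    rng = random.Random(seed)
    heads = 0
    longest = 0
    current = 0
    for _ in range(n_flips):
        if rng.random() < 0.5:  # heads
            heads += 1
            current += 1
            if current > longest:
                longest = current
        else:  # tails ends the current streak
            current = 0
    return heads / n_flips, longest


share, longest = simulate_flips(1_000_000)
print(f"share of heads: {share:.4f}")
print(f"longest run of heads: {longest}")
```

With this many flips, the share of heads should land very close to 0.5 while the longest streak still runs well past ten. Each streak looks remarkable when viewed alone, yet it is what chance predicts over enough trials.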

Representativeness Heuristic – This is a mental shortcut that leads us to conclude that a specific example that matches our schema is more likely than a general example. Here’s the classic illustration of this heuristic. Linda is 31 years old, single, outspoken, and very bright. She majored in philosophy. As a student, she was deeply concerned with issues of discrimination and social justice, and also participated in anti-nuclear demonstrations. Which is more probable? a.) Linda is a bank teller or b.) Linda is a bank teller and is active in the feminist movement. Many people say “b” because Linda matches their schema for a feminist, but “a” is more likely, since “a” can be true without “b” being true, while “b” cannot be true unless “a” is also true.

– Same or nearly the same as conjunction fallacy, stereotypes

– Related to the base-rate fallacy, hasty generalization (fallacy), schemas, archetypes, prejudice, in-group/out-group bias
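
The logic of the Linda problem can be checked with simple counting. The sketch below uses made-up numbers (1,000 hypothetical women who fit Linda’s description, 50 of whom are bank tellers, 40 of those also active feminists). These counts are assumptions invented purely for illustration and do not come from any study. The point is only that the group matching “b” is always a subset of the group matching “a.”

```python
from fractions import Fraction

# Hypothetical counts, invented purely for illustration.
population = 1000            # women who fit Linda's description
bank_tellers = 50            # option a: bank teller (feminist or not)
feminist_bank_tellers = 40   # option b: bank teller AND active feminist

# Option b describes a subset of option a, so its count can never be larger.
assert feminist_bank_tellers <= bank_tellers

p_a = Fraction(bank_tellers, population)
p_b = Fraction(feminist_bank_tellers, population)

print(f"P(a) = {float(p_a)}")  # P(a) = 0.05
print(f"P(b) = {float(p_b)}")  # P(b) = 0.04
print("a is at least as likely as b:", p_a >= p_b)
```

Even if nearly every bank teller in the group were a feminist, “b” could at best tie with “a.” It can never beat it.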

Routinization or Foot-in-the-door – This is when we agree to or accept something small, that small thing becomes our new standard or expectation, then something is added and we go along with that as well, and things continue to escalate. This is a classic sales tactic, but it also explains how people might do things that seem morally disgusting, even to the person who did them. In apologetics, this technique can be used to build bridges and rapport with people so you can have conversations. Start by finding places where you agree on things and then move slowly from there. In most cases, people will not be open to hearing what you say if you give them too much at once, so using this technique can keep you from overwhelming the other person.

– Same or nearly the same as habituation, escalation of commitment

– Related to the hedonic treadmill, systematic desensitization, normalization, mere exposure effect, anchoring, door-in-the-face, commitment bias, obedience, self-herding, optimal distinctiveness

Scarcity – We want to feel special so if something is rare, we feel special by having it. Essentially, this principle works by making people feel superior to others in some way. In terms of religious dialogue, people think they have the special, true knowledge of reality, which makes them feel special and they will not give it up easily. I’ve seen this work in all directions so don’t think you haven’t fallen victim to this type of thinking, even if it’s unconscious.

– Same or nearly the same as

– Related to unity and social proof (somewhat opposite), not-invented-here, loss aversion, in-group/out-group bias, optimal distinctiveness.

Schemas – If you’ve watched anything from Jordan Peterson you’ve probably heard him talk about Jung’s idea of an archetype. This is very similar. It’s a mental representation of something in an idealized form. This relates to decision-making because we have a very hard time understanding, remembering, and accepting things that don’t fit into our schemas. For instance, if someone’s schema for religious belief includes blind faith, they will likely have a very hard time understanding how evidence could even be applied to religious belief. They almost certainly won’t accept any evidence provided at that point either. They need time to come to terms with the new information and adjust their schema first (unfortunately, many people won’t adjust it and will just forget or ignore the new information).

– Same or nearly the same as (Jungian) archetypes, prototype

– Related to mere awareness effect, optimal distinctiveness